Unfortunately, these commands are unable to participate in usage requirements, and therefore would fail to use propagated compiler flags or definitions. In previous versions of CMake, building CUDA code required commands such as cuda_add_library. Information such as include directories, compiler defines, and compiler options can be associated with targets so that this information propagates to consumers automatically through target_link_libraries. Usage requirements are at the core of modern CMake. Let’s be a little more adventurous and also generate a static library that is used by an executable. The first thing that everybody does when learning CMake is write a toy example like this one that generates a single executable. Now that CMake has determined what languages the project needs and has configured its internal infrastructure we can go ahead and write some real CMake code. (Debug, Release, RelWithDebInfo, and MinSizeRel). When CUDA is enabled, CMake provides default flags for each configuration This results in generation of the common cache language flags that Figure 3 shows. This lets CMake identify and verify the compilers it needs, and cache the results. Next, on line 2 is the project command which sets the project name ( cmake_and_cuda) and defines the required languages (C++ and CUDA). For example, to use the static CUDA runtime library, set it to –cudart static. # so that the static cuda runtime can find it at runtime.īUILD_RPATH $. # We need to add the path to the driver (libcuda.dylib) as an rpath, Target_link_libraries(particle_test PRIVATE particles) PROPERTIES CUDA_SEPARABLE_COMPILATION ON) # could be called by other libraries and executables # particle library to be built with -dc as the member functions # We need to explicitly state that we need all CUDA files in the Target_compile_features(particles PUBLIC cxx_std_11) # particles will also build with -std=c++11

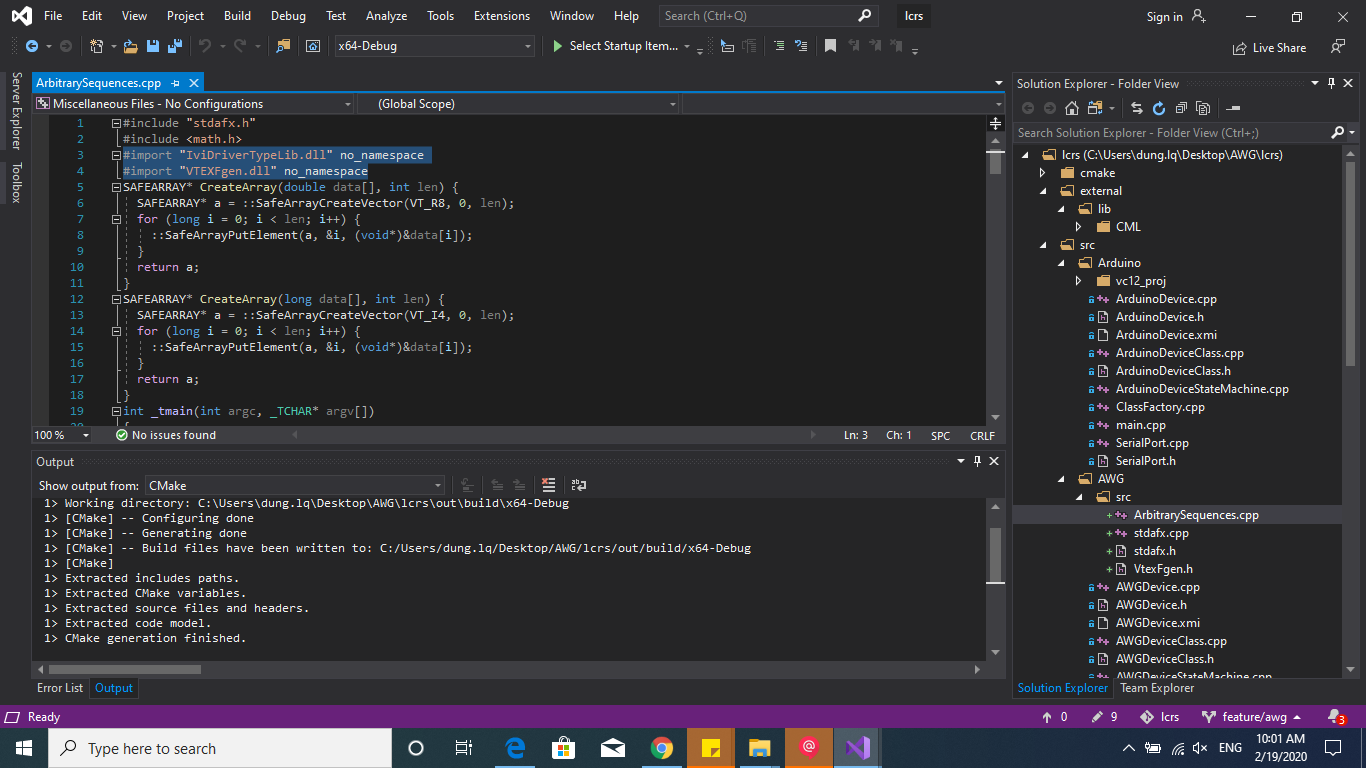

# As this is a public compile feature anything that links to # Request that particles be built with -std=c++11 Project(cmake_and_cuda LANGUAGES CXX CUDA) cmake_minimum_required(VERSION 3.8 FATAL_ERROR) I have provided the full code for this example on Github. Listing 1 shows the CMake file for a CUDA example called “particles”. Let’s start with an example of building CUDA with CMake. CUDA now joins the wide range of languages, platforms, compilers, and IDEs that CMake supports, as Figure 1 shows. CMake 3.8 makes CUDA C++ an intrinsically supported language. Since 2009, CMake (starting with 2.8.0) has provided the ability to compile CUDA code through custom commands such as cuda_add_executable, and cuda_add_library provided by the FindCUDA package. In this post I want to show you how easy it is to build CUDA applications using the features of CMake 3.8+ (3.9 for MSVC support). CMake adds CUDA C++ to its long list of supported programming languages. The suite of CMake tools were created by Kitware in response to the need for a powerful, cross-platform build environment for open-source projects such as ITK and VTK. CMake generates native makefiles and workspaces that can be used in the compiler environment of your choice. Many developers use CMake to control their software compilation process using simple platform- and compiler-independent configuration files.

How do you target multiple platforms without maintaining multiple platform-specific build scripts, projects, or makefiles? What if you need to build CUDA code as part of the process? CMake is an open-source, cross-platform family of tools designed to build, test and package software across different platforms.

Cross-platform software development poses a number of challenges to your application’s build process.
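To make the idea of usage requirements concrete, here is a minimal sketch of how settings attached to a library target can reach whatever links to it. It is an illustration under stated assumptions, not the project file from this post: the source file names, the include directory, and both preprocessor definitions are hypothetical. Only the commands themselves (target_include_directories, target_compile_definitions, target_link_libraries) are standard CMake.

```cmake
cmake_minimum_required(VERSION 3.8 FATAL_ERROR)
project(usage_requirements_sketch LANGUAGES CXX CUDA)

# Hypothetical source file, for illustration only.
add_library(particles STATIC particle.cu)

# PUBLIC: used when building particles and by every target that links to it.
# The include directory and the define are hypothetical.
target_include_directories(particles PUBLIC ${CMAKE_CURRENT_SOURCE_DIR}/include)
target_compile_definitions(particles PUBLIC PARTICLES_USE_DOUBLE)

# PRIVATE: used only when building particles itself (hypothetical define).
target_compile_definitions(particles PRIVATE PARTICLES_BUILDING_LIBRARY)

# Hypothetical source file, for illustration only.
add_executable(particle_test test.cu)

# Linking is what carries the PUBLIC include directory and definition over;
# particle_test makes no target_include_directories call of its own.
target_link_libraries(particle_test PRIVATE particles)
```

With this layout the consumer states only what it links to, and the PUBLIC settings follow along. That is the behavior the older cuda_add_library style could not take part in.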
